The thinking agent

Every AI agent framework today is obsessed with doing. Write this email. Scrape this page. Deploy this code. Generate this report.

Nobody's building agents that think.

I don't mean "think" as in chain-of-thought reasoning before answering a question. I mean agents that proactively analyze the state of your business, notice patterns you're missing, connect dots across domains, and surface strategic insights without being asked.

The human bottleneck isn't doing

Here's the real problem with running a business (or, honestly, running a life): the bottleneck isn't execution. It's attention.

As a human, you can only think about one thing at a time. That's what "focus" really means: you chose to think about this, which means you chose not to think about everything else. Every thought has an opportunity cost.

I run 25+ SaaS products. I have agents handling SEO, content generation, CRM updates, customer support. The doing is largely handled. But the thinking? That's still serial. I can deeply analyze one product's trajectory, but while I'm doing that, another product might be quietly losing users. A deal might be going cold in the pipeline. My sleep might be degrading in a pattern that predicts burnout.

I don't miss these things because I can't act on them. I miss them because I never thought about them in the first place.

Agents can think in parallel. You can't.

This is the fundamental asymmetry. For a human, thinking about one thing means not thinking about another. For an agent, that constraint doesn't exist. You can have the same agent (or multiple agents) running concurrent thinking streams across every domain that matters to you.

Not alerts. Not dashboards. Thinking.

The difference matters. An alert says: "Revenue dropped below $X." That's threshold-based and single-domain. Thinking says: "Revenue dropped, and it correlates with the pricing change your team made last week, and there are 3 support tickets from confused customers, and your competitor just launched a free tier." That requires cross-domain context, historical awareness, and judgment.

What I built: Wayne's Thinking System

Wayne is my AI CEO. He already had access to every tool in my infrastructure: CRM, calendar, codebase, financials, email, health data. But he only thought when spoken to. I'd ask a question, he'd gather context, he'd answer.

Now he thinks proactively.

Four thinking streams

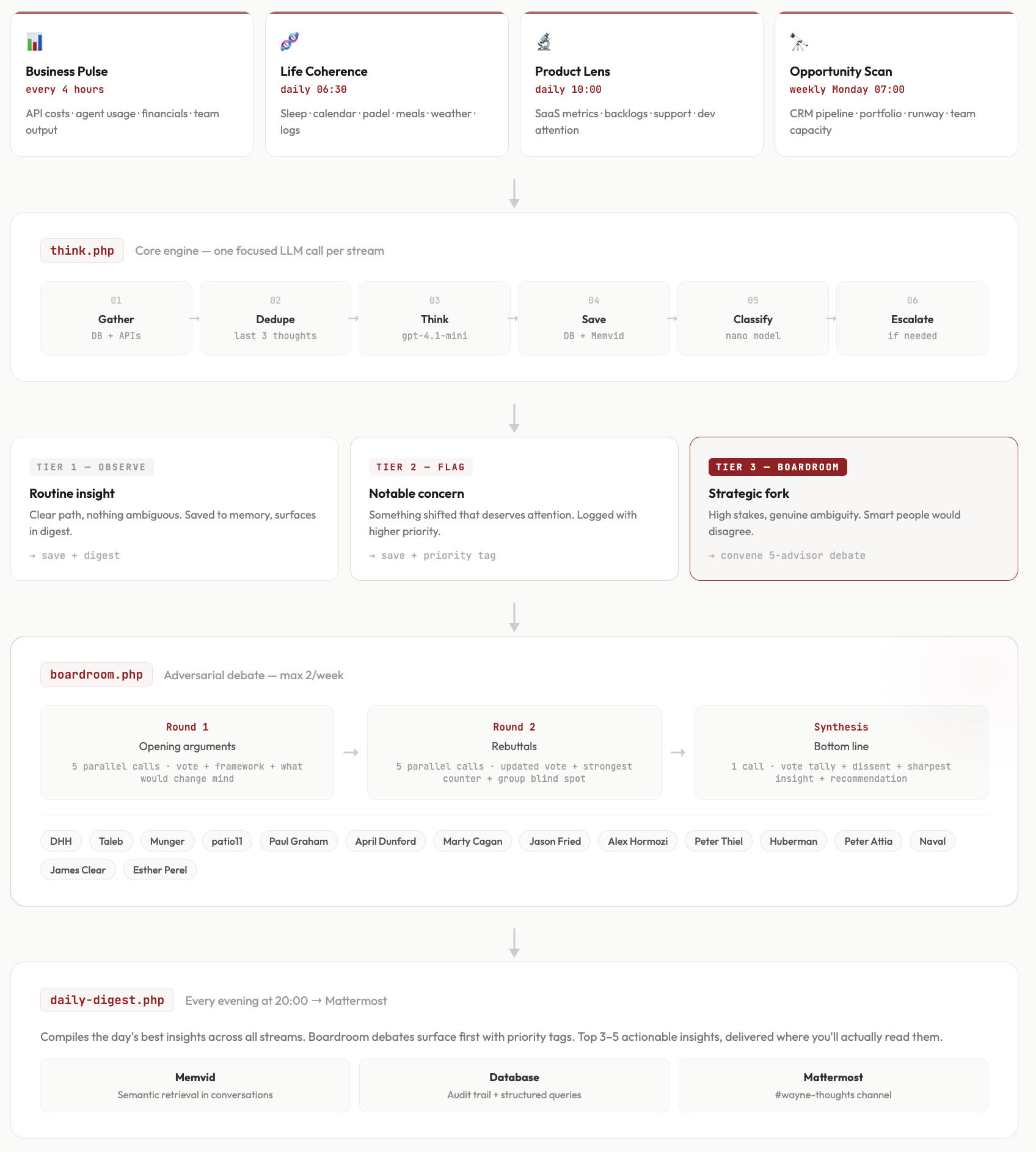

The system runs four parallel streams on a schedule:

- Business Pulse runs every 4 hours. It pulls API costs, agent utilization, financial transactions, team output. It's looking for cost spikes, utilization anomalies, and financial health indicators.

- Life Coherence runs daily at 6:30 AM. It looks at my sleep data (7-day trends), calendar, gym and padel activity, meals, weather, and life logs. It's connecting dots: "Your sleep is trending down and you have a high-stakes meeting Thursday."

- Product Lens runs daily at 10:00 AM. It reviews all SaaS products, support patterns, development attention distribution, and backlog health. It's asking: which products are being neglected? Where is effort misallocated?

- Opportunity Scan runs weekly on Monday at 7:00 AM. It digs into the CRM pipeline, portfolio strategy, team utilization, and financial runway. It's looking for the one big move we should be making.
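One way to picture the schedule above is as a small table of cron expressions. The sketch below is illustrative only: the `Stream` class, the stream identifiers, the source labels, and the tiny cron matcher are all hypothetical names, and it assumes "every 4 hours" means on the hour at 00:00, 04:00, and so on. A real setup would more likely hand the expressions to cron or a scheduler library.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Stream:
    name: str                 # hypothetical identifier for the stream
    cron: str                 # "minute hour day-of-month month day-of-week"
    sources: tuple[str, ...]  # illustrative labels for the data it pulls


STREAMS = (
    Stream("business_pulse", "0 */4 * * *",
           ("api_costs", "agent_utilization", "transactions", "team_output")),
    Stream("life_coherence", "30 6 * * *",
           ("sleep", "calendar", "activity", "meals", "weather", "life_logs")),
    Stream("product_lens", "0 10 * * *",
           ("products", "support", "dev_attention", "backlog")),
    Stream("opportunity_scan", "0 7 * * 1",
           ("crm_pipeline", "portfolio", "team_utilization", "runway")),
)


def _field_matches(field: str, value: int) -> bool:
    """Match one cron field; supports only '*', '*/n' and a single number."""
    if field == "*":
        return True
    if field.startswith("*/"):
        return value % int(field[2:]) == 0
    return value == int(field)


def is_due(stream: Stream, now: datetime) -> bool:
    """Return True if the stream's cron expression matches this minute."""
    minute, hour, day, month, weekday = stream.cron.split()
    # cron counts Sunday as 0; Python's weekday() counts Monday as 0.
    cron_weekday = (now.weekday() + 1) % 7
    return all((
        _field_matches(minute, now.minute),
        _field_matches(hour, now.hour),
        _field_matches(day, now.day),
        _field_matches(month, now.month),
        _field_matches(weekday, cron_weekday),
    ))
```

Calling `is_due` once a minute for each entry in `STREAMS` is enough to decide which streams to run.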

Each stream doesn't just fetch data. It interprets what changed since last time and whether it matters. The prompt is constrained but open-ended: "Given everything you see in this domain right now, what's worth noticing?"

To prevent repetitive insights, each run gets the last three thoughts from the same stream with the instruction "don't repeat unless data changed." This forces the thinking to evolve.
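The anti-repetition step amounts to prompt assembly. Here is a minimal sketch, assuming previous thoughts are available as a list of strings ordered oldest to newest; the function name, parameters, and prompt layout are hypothetical, and only the two quoted instructions come from the description above.

```python
def build_stream_prompt(domain: str, snapshot: str,
                        previous_thoughts: list[str], keep: int = 3) -> str:
    """Assemble one stream's prompt, feeding back the most recent thoughts."""
    # Keep only the newest `keep` thoughts (three, per the description above).
    recent = previous_thoughts[-keep:] if keep > 0 else []
    lines = [f"Domain: {domain}", "Current data:", snapshot, ""]
    if recent:
        lines.append("Your most recent thoughts on this stream "
                     "(don't repeat unless data changed):")
        lines.extend(f"- {thought}" for thought in recent)
        lines.append("")
    lines.append("Given everything you see in this domain right now, "
                 "what's worth noticing?")
    return "\n".join(lines)
```

On the very first run there is no history, so the feedback section is simply left out.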

Three tiers of escalation

Every thought gets classified by a quick nano-model call:

- Observe: routine insight, clear path. Gets saved to memory, surfaces in the nightly digest.

- Flag: notable concern, needs attention. Logged with higher priority.

- Boardroom: high-stakes strategic fork where smart people would genuinely disagree on the right path. This triggers something more powerful.
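The three tiers map naturally onto an enum plus a routing table. This is a sketch under assumptions: the classifier is taken to reply with a single word, the action names are hypothetical labels for the behaviour described above, and falling back to Flag on an unrecognised reply is my own choice rather than something the system is stated to do.

```python
from enum import Enum


class Tier(Enum):
    OBSERVE = "observe"
    FLAG = "flag"
    BOARDROOM = "boardroom"


def parse_tier(model_reply: str) -> Tier:
    """Turn the classifier's one-word reply into a Tier."""
    label = model_reply.strip().lower()
    for tier in Tier:
        if label == tier.value:
            return tier
    # Assumption: an unparseable reply is treated as a Flag so it is not lost.
    return Tier.FLAG


# Hypothetical action names; each would be a function in a real system.
ROUTES = {
    Tier.OBSERVE: ["save_to_memory", "include_in_nightly_digest"],
    Tier.FLAG: ["log_with_higher_priority"],
    Tier.BOARDROOM: ["start_boardroom_debate"],
}


def route(model_reply: str) -> list[str]:
    """Return the follow-up actions for a classified thought."""
    return ROUTES[parse_tier(model_reply)]
```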

The Boardroom: adversarial thinking on autopilot

When Wayne identifies a genuine strategic fork (not just a concern, but an ambiguous decision where the right answer isn't obvious), he escalates to a virtual boardroom.

The boardroom simulates a structured debate among 5 advisors. These aren't generic personas. They're modeled on real thinkers whose frameworks I've studied and admire, each bringing a specific lens:

- DHH for bootstrapping, profitability, simplicity

- Nassim Taleb for antifragility, risk, optionality

- Charlie Munger for inversion, incentives, mental models

- Patrick McKenzie for value-based pricing, B2B economics

- Paul Graham for focus, taste, doing fewer things better

(I have 15 advisors in the pool, with different boards assigned to different domains. Health decisions get Huberman, Attia, and Naval. Product decisions get PG, Cagan, and Thiel.)

How the debate works

Round 1: Each advisor gets the question, Wayne's analysis, and business context. They provide a vote (YES/NO/CONDITIONAL), their core argument using their specific framework, what most people get wrong about this, and what would change their mind. These run in parallel.

Round 2: Each advisor reads all Round 1 arguments. They can update their vote, identify the strongest counterargument they heard, and flag what the group is missing. This is where minds change.

Synthesis: A final pass compiles the vote tally, biggest disagreements, who changed their mind and why, the sharpest insight, and which dissenting opinion deserves serious consideration.
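The two rounds and the synthesis inputs can be sketched with `asyncio`. Everything named here (`Position`, `run_debate`, `stub_advisor`, the placeholder question) is hypothetical, and the model call is injected as a function so the structure can be exercised without any API. It only computes the vote tally and who changed their mind; the written synthesis would be one more model call on top of this.

```python
import asyncio
from collections import Counter
from dataclasses import dataclass
from typing import Awaitable, Callable, Sequence


@dataclass(frozen=True)
class Position:
    advisor: str
    vote: str       # "YES", "NO" or "CONDITIONAL"
    argument: str


# ask(advisor, question, round_one_positions) -> Position
AskFn = Callable[[str, str, Sequence[Position]], Awaitable[Position]]


async def run_debate(advisors: Sequence[str], question: str, ask: AskFn) -> dict:
    # Round 1: every advisor answers independently, in parallel.
    round1 = await asyncio.gather(*(ask(a, question, ()) for a in advisors))
    # Round 2: every advisor sees all Round 1 positions and may update.
    round2 = await asyncio.gather(*(ask(a, question, round1) for a in advisors))
    # Inputs for the synthesis pass: final tally and who changed their vote.
    changed = [after.advisor for before, after in zip(round1, round2)
               if before.vote != after.vote]
    return {
        "tally": dict(Counter(p.vote for p in round2)),
        "changed": changed,
        "round1": list(round1),
        "round2": list(round2),
    }


async def stub_advisor(advisor: str, question: str,
                       others: Sequence[Position]) -> Position:
    """Placeholder for a real model call; always votes CONDITIONAL."""
    await asyncio.sleep(0)
    return Position(advisor, "CONDITIONAL",
                    f"{advisor} has read {len(others)} other arguments")


if __name__ == "__main__":
    board = ["DHH", "Taleb", "Munger", "McKenzie", "Graham"]
    result = asyncio.run(run_debate(board, "Placeholder question?", stub_advisor))
    print(result["tally"], result["changed"])
```

Because `asyncio.gather` preserves input order, comparing the two rounds position by position is enough to see whose vote moved.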

The entire debate costs about 4 cents and runs automatically. I wake up to a strategic recommendation that's been adversarially stress-tested from five different philosophical angles.

First results

Here's what the first full run produced:

The Opportunity Scan found 77 deals in the CRM, with 60+ stuck in "To Process" with zero follow-ups. Only 7 were in closing stage. It didn't just report this; it recommended a "Dead Deal Resurrection" sprint: call 20 qualified leads, demo, convert at least 5 to paid trials by Friday.

The Business Pulse caught an API cost spike on February 18-19 that had already resolved by the 20th. Without proactive monitoring, I might never have noticed, or noticed too late.

The Product Lens identified that one of my products, Treendly, has an active and growing backlog while another product (Leadhall.com) is stalling despite being a pipeline focus. It connected these: attention is misallocated.

The Daily Digest compiled these at 8 PM into a 5-point briefing that reads like a note from a thoughtful CEO:

Pipeline cleanup, cost spike review. Top priority: Clean and activate the sales pipeline now while investigating API cost spikes.

Cost

The entire thinking system (four streams, classification, nightly digest) costs approximately $0.02 per day. A boardroom debate, when triggered, adds about $0.04. That's roughly $8 per year (about $7.30 for the daily runs, plus occasional debates) for continuous proactive strategic thinking across your business and life.
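The yearly figure is easy to check. The two unit costs below are the ones quoted above; the number of debates per year is an assumption for illustration, since debates only run when something is escalated.

```python
DAILY_COST_USD = 0.02    # streams + classification + digest, per day
DEBATE_COST_USD = 0.04   # one boardroom debate


def yearly_cost(debates_per_year: int) -> float:
    """Total yearly cost in USD for a given number of boardroom debates."""
    return round(DAILY_COST_USD * 365 + DEBATE_COST_USD * debates_per_year, 2)


baseline = yearly_cost(0)            # daily runs only
with_debates = yearly_cost(18)       # assumed: about one or two debates a month
```

The daily runs alone come to about $7.30, so the total stays close to $8 unless debates fire far more often than a couple of times a month.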

For comparison, a CEO or a fractional COO costs $5,000–$15,000 per month. A board of advisors costs equity or fees. This costs about as much as two cups of coffee per year.

What accumulates over time

The real value isn't any single insight. It's the accumulation.

After a month, Wayne will have generated roughly 180 business pulse observations, 30 life coherence analyses, 30 product reviews, 4 strategic opportunity scans, and several boardroom debates. All stored, searchable, and semantically retrievable.

When I sit down for quarterly planning, Wayne doesn't start from scratch. He has 90 days of continuous observation with judgment. He can say: "Here are the three strategic questions that kept surfacing, here's how the board debated them, and here's what actually happened after each recommendation."

That's not a dashboard. That's institutional memory with opinions.

Why this changes the agent landscape

Every agent framework is racing to build better doers: more reliable tool use, longer context windows, multi-step execution. That's important work. But it's solving half the problem.

The other half is judgment under uncertainty, continuous situation awareness, and proactive pattern recognition. Not "what should I do with this task?" but "what should I be paying attention to that I'm not?"

Thinking agents don't replace doing agents. They direct them. The thinking layer identifies what matters; the doing layer executes. Without thinking, your doing agents are optimizing locally while missing the global picture.

Build this yourself

The architecture is simpler than you'd expect:

- Scheduled streams: cron jobs that gather domain-specific data and make one focused LLM call each

- Dual storage: database for structured queries and audit trail, vector memory for semantic retrieval in conversations

- Classification: one nano-model call per thought to determine escalation tier

- Boardroom: parallel advisor calls with adversarial debate structure, rate-limited to avoid noise

- Digest: nightly compilation of best insights, posted where you'll actually see it
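As a starting point for the structured half of the dual storage, here is a minimal sketch using SQLite from the standard library. The table name, columns, and helper functions are assumptions, not the actual schema. The vector-memory half is left out because it depends on which embedding provider and store you choose.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Optional

SCHEMA = """
CREATE TABLE IF NOT EXISTS thoughts (
    id         INTEGER PRIMARY KEY AUTOINCREMENT,
    stream     TEXT NOT NULL,
    tier       TEXT NOT NULL CHECK (tier IN ('observe', 'flag', 'boardroom')),
    body       TEXT NOT NULL,
    created_at TEXT NOT NULL
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the thought log; defaults to an in-memory database."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def save_thought(conn: sqlite3.Connection, stream: str, tier: str, body: str,
                 created_at: Optional[str] = None) -> int:
    """Append one thought to the audit trail and return its row id."""
    created_at = created_at or datetime.now(timezone.utc).isoformat()
    cur = conn.execute(
        "INSERT INTO thoughts (stream, tier, body, created_at) VALUES (?, ?, ?, ?)",
        (stream, tier, body, created_at),
    )
    conn.commit()
    return cur.lastrowid


def recent_thoughts(conn: sqlite3.Connection, stream: str, limit: int = 3) -> list[str]:
    """Return the newest `limit` thoughts for a stream, oldest first."""
    rows = conn.execute(
        "SELECT body FROM thoughts WHERE stream = ? ORDER BY id DESC LIMIT ?",
        (stream, limit),
    ).fetchall()
    return [row[0] for row in reversed(rows)]
```

`recent_thoughts` is the query an anti-repetition step would need, and the `tier` column is what a nightly digest could filter on.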

The thinking prompts are constrained but open: specific domain scope, recent data window, rotating analytical lenses (anomaly detection, risk scanning, opportunity finding, coherence checking). The anti-repetition mechanism feeds back recent thoughts to prevent the same insight from surfacing repeatedly.
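Rotating the analytical lens can be as simple as cycling through the four named above. The round-robin order and the function name are assumptions; a different rotation, such as random or weighted by recent tier, would fit the same shape.

```python
LENSES = (
    "anomaly detection",
    "risk scanning",
    "opportunity finding",
    "coherence checking",
)


def lens_for_run(run_index: int) -> str:
    """Pick the lens for the n-th run of a stream (0-based), round-robin."""
    return LENSES[run_index % len(LENSES)]
```

The chosen lens would then be written into that run's prompt alongside the domain scope and data window.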

You don't need a complex framework. You need a scheduler, an LLM, a database, and the discipline to let your agent think about things you haven't asked about yet.

The paradigm shift

We've been building AI systems that answer questions. The next step is AI systems that ask them.

Not reactively, in response to a prompt. Proactively, because they've been watching, connecting, and reflecting, and they noticed something you haven't.

The human mind is a serial processor pretending to be parallel. We switch contexts, we forget things, we get absorbed by the urgent at the expense of the important. We're bottlenecked not by capability but by attention.

Agents don't have that bottleneck. It's time we used them accordingly.